“Operation Epic Fury” is a Marketing Term Powered by Ego and Potential AI Hallucinations, Not Military Reality

The military campaign designated by Trump’s administration as “Operation Epic Fury,” initiated on February 28, 2026, by a joint coalition of United States and Israeli forces, represents a potentially multi-generational escalation of regional tensions in the Middle East.

Furthermore, this war presents the opportunity to focus on the military’s unprecedented reliance on LLM-based AI, which may have led to catastrophic outcomes.

During the first 24 hours of this military operation, the U.S. Central Command (CENTCOM) reportedly used Anthropic’s Claude for “intelligence assessments, target identification and simulating battle scenarios,” according to a report from The Wall Street Journal.

Claude AI had been deeply integrated into the Department of War’s classified networks and Palantir’s Maven Smart System.

The subsequent reported strikes on thirteen hospitals and the bombing of the Shajareh Tayyiba girls’ school in Minab must bring the reliability of these systems into sharp focus and, yet again, force scrutiny of the role of algorithmic “hallucinations” in lethal decision-making.

The AI system reportedly generated approximately 1,000 “prioritised” targets on the first day alone, a volume that suggests the AI was not merely assisting humans but was potentially the primary engine of target production.

It is currently unknown whether humans independently confirmed these targets, a critical gap given the hallucination risk of LLM-based AI models.

By March 10, the total number of strikes had reportedly surpassed 5,000, a pace nearly double that of the 2003 “Shock and Awe” campaign in Iraq.

The Bombing of the Shajareh Tayyiba Girls’ School

The most prominent example of potential algorithmic failure occurred on February 28, 2026, in Minab, Iran. A precision strike hit the “Shajareh Tayyiba” (The Good Tree) girls’ elementary school, killing at least 165 people, most of them children aged seven to twelve.

I noticed the media and US and Israeli representatives suggesting that the school was hit by a “failed rocket launch” by the IRGC. However, new video footage analysed by Bellingcat and CNN shows a munition consistent with an American BGM-109 Tomahawk Land Attack Missile striking the IRGC naval base adjacent to the school. The U.S. is the only known participant in the conflict to possess this weapon.

The Pentagon has acknowledged that U.S. forces were the most likely perpetrators of the strike, although both the U.S. and Israel initially stated only that they were “investigating reports of civilian harm.”

Furthermore, Reuters has identified that the site was hit by a “double tap”: two separate strikes on the same site, approximately 40 minutes apart.

Other forensic analysis and satellite imagery released by Planet Labs showed multiple impact craters within the military complex, suggesting that while the targeting was “precise,” it either failed to account for the school’s proximity or misidentified the school building as part of the military infrastructure.

However, Al Jazeera’s investigation reveals that the school has been clearly separate from an adjacent military site for at least 10 years. Forensic analysts now suggest the strike may have been caused by “stale data.” While the building was part of an IRGC complex in 2013, satellite imagery from 2016 shows it walled off and converted into a civilian institution with murals and playgrounds.

It appears the AI systems may have hallucinated the school as a military target by processing outdated intelligence without human verification, leading to this deadly error.

The Systematic Targeting of Healthcare – The WHO Report

Beyond the Minab school bombing, the World Health Organization (WHO) has now verified thirteen attacks on healthcare facilities and various levels of the medical infrastructure in Iran and one in Lebanon. These strikes have reportedly killed four medics and injured at least 25 others.

One notable incident involved the Gandhi Hotel Hospital in northern Tehran, which suffered severe damage when a projectile hit an adjacent state television broadcast tower. Nurses were reportedly forced to flee the facility while carrying surviving infants.

The residents of Tehran described the bombardment as “indiscriminate,” with one resident stating, “We are becoming like Gaza.”

While we do not have a clear indication of the level of AI reliance in this case, if decisions were made based on system-defined targets, then these incidents highlight a critical weakness that I have discussed at length on The CEO Retort podcast and beyond.

Let me reiterate a point I have made many times over the years, based on my direct experience in developing AI models and deeptech businesses since 2002: AI models struggle to handle the real-world challenge of assessing and understanding “proportionality” and “collateral damage.”

An LLM like Claude may identify a military target, such as a communications tower. But its “reasoning” may not effectively prioritise the protection of an adjacent hospital, especially when tasked with maximising speed and military impact. No model can operate in this capacity, yet these models are being sold and used as if they can.

The AI-Powered Decision Making that Should Never Have Been

Certainly, the integration of AI into the United States military apparatus is not new. It has evolved through several distinct phases, moving from basic data processing to the sophisticated generative reasoning seen in early 2026.

The foundation of the architecture in question was Project Maven, launched in 2017, which focused on using computer vision to identify targets in drone and satellite imagery.

By 2024, this framework had evolved into the Maven Smart System, managed by Palantir Technologies, which synthesised data from satellites, signals intelligence (SIGINT), and social media into a single interface for commanders.

Claude AI, developed by Anthropic, became a pivotal “reasoning engine” within this system. Unlike traditional AI, which might only flag a visual pattern, Claude’s LLM capabilities allowed it to process text-based intelligence reports, synthesise disparate data points, and provide prioritised target lists with specific GPS coordinates and weapons recommendations. Notice that none of these services is a decision.

By 2024, Claude was the only frontier AI model approved to operate on the Pentagon’s most sensitive Impact Level 6 (IL6) networks, making it the primary tool for the military’s most sensitive intelligence work.

The recent fallout from the Pentagon-Anthropic dispute has since fundamentally altered the competitive landscape of defence AI. After refusing to allow Claude to be used for mass domestic surveillance or fully autonomous weapons, Anthropic was formally designated a “supply chain risk” by Defence Secretary Pete Hegseth.

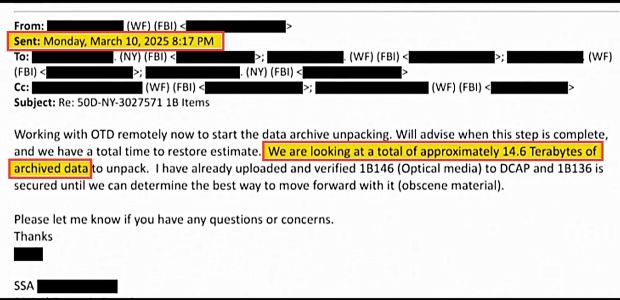

In response, Anthropic filed two lawsuits against the administration on March 9, 2026, characterising the move as an “unlawful campaign of retaliation.”

This stance has triggered unprecedented industry solidarity. Microsoft has filed a legal brief asking a judge to block the designation, and over 30 employees from OpenAI and Google DeepMind have filed an amicus brief in support of Anthropic.

Nonetheless, as Anthropic is phased out, OpenAI and its CEO, Sam Altman, have moved quickly to secure a $200M deal for classified networks. The new contract has already come under fire for potential loopholes. While it prohibits “intentional” surveillance of U.S. citizens, legal experts warn it may still allow for incidental or unintentional data collection, the very terms Anthropic refused to accept.

Remember that all of the above took place within the current so-called red lines, which also played a role in the Venezuela military operation.

So, while the drama continues, the military is moving toward a race to the bottom, where companies that impose fewer restrictions are favoured over those that prioritise safety. I cannot even begin to imagine what we will witness in the coming weeks and months in terms of civilian casualties.

Humanitarian and Economic Ripple Effects

The human toll of this apparent algorithmic warfare is staggering. According to estimates from the US-based Human Rights Activists News Agency (HRANA), at least 1,262 civilians have been killed in Iran, including approximately 200 children. The strikes have displaced an estimated 100,000 people from Tehran and 80,000 in Lebanon.

The global economy is already feeling the shock: oil has clocked its biggest single-day gain in six years, with Brent crude surging by more than 20% to $114 a barrel, at the time of writing, as tensions flared in the Strait of Hormuz.

In response to the energy crisis, the U.S. Treasury was forced to issue a 30-day waiver allowing Indian refiners to buy Russian oil to stabilise the market.

This messy war demonstrates that, while models like Claude provide the speed and “reasoning” necessary for modern, high-intensity warfare, they also introduce fatal risks. The usual AI doomers like to talk about AI killing humans or believe in the imminent emergence of Skynet. Firstly, get out of Hollywood fantasy worlds.

Secondly, what we are witnessing here is a delusional belief in AI, and it exposes a dull reality that would never make a box-office-busting Hollywood script: humanity’s destruction will come from our collective failure to accept that the probabilistic logic of AI systems cannot govern life-and-death decisions.

Several high-profile researchers, such as Meta’s former Chief AI Scientist, Yann LeCun, frequently compare AI to four-year-olds to highlight its current limitations. I have been told the same since my early years at Google Campus, and as a biomedical scientist, AI innovator and parent, I do not agree with this analogy.

Four-year-old toddlers learn at a much faster and more efficient pace than any AI system. They do not need to study millions of cat photos to identify a cat! A recent study from Lancaster University, for example, has shown that young children beat AI models in learning new language skills.

Nonetheless, what we are witnessing with the Iran war is more like four-year-old toddlers piloting the Titanic, with deadly consequences for potentially tens of thousands of civilian lives throughout the region. The question for me is very simple: Is this intentional, or criminal negligence?
